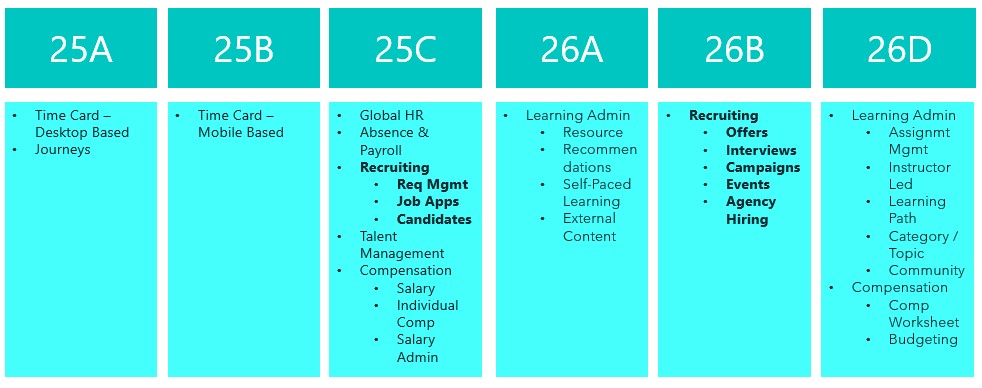

Today I had the opportunity to attend and present at a partner enablement event hosted by the Oracle AI Success Navigator product team, focused on how partners like Version 1 can best use Oracle’s tooling to bring genuine, measurable value to our customers. The session brought together presentations, product demos, hands-on labs, and open discussion, covering Oracle Cloud Success Navigator and Oracle Guided Learning (OGL). It was a useful day, and I wanted to share some of the key takeaways while they’re fresh.

If you haven’t come across Cloud Success Navigator yet, it’s Oracle’s digital engagement platform, provided free to Oracle Fusion Cloud customers and built to help organisations design, implement, and accelerate their cloud and AI roadmaps. It sits at the centre of Oracle’s broader AI Factory offering, a bundled set of partner and customer services that Oracle launched to speed up AI adoption.

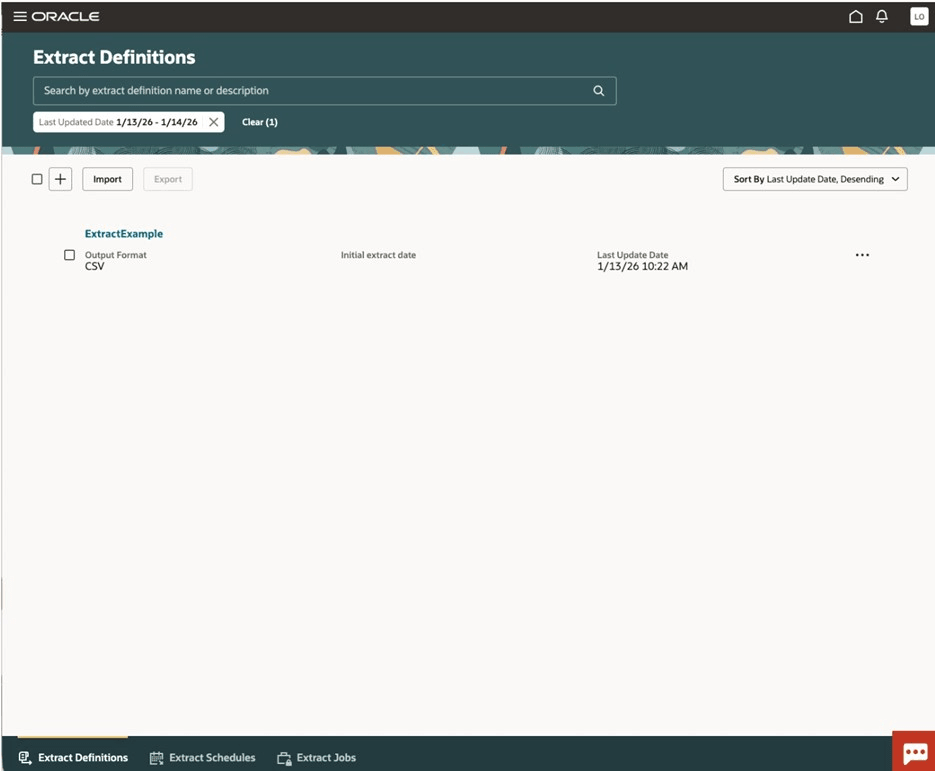

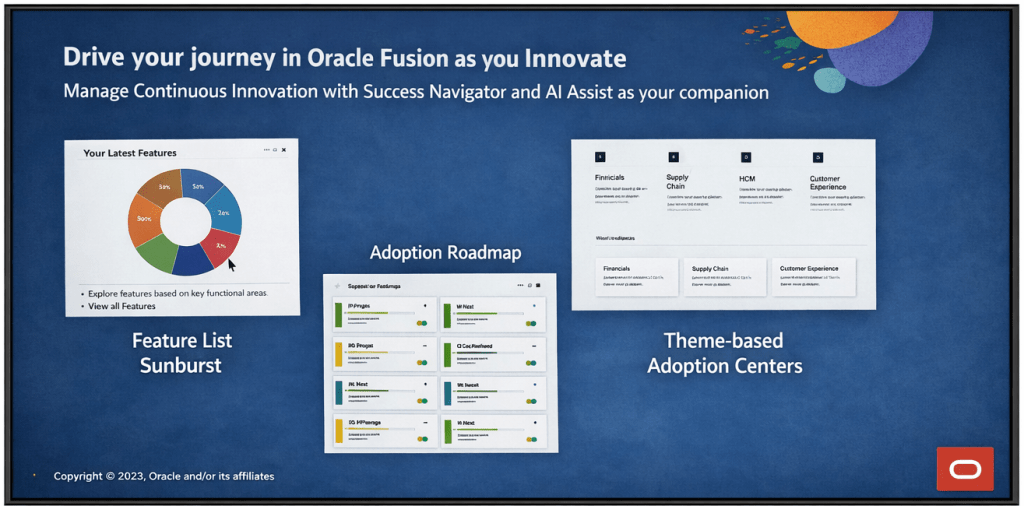

At its core, Cloud Success Navigator gives customers a single place to discover new features, plan adoption, track key milestones, and access Oracle Modern Best Practice (OMBP) guidance. The sunburst visualisation is particularly useful: it surfaces relevant features based on your production profile, so your team isn’t wading through capabilities that don’t apply to your configuration. You can tag features across Now, Next, and Later columns, which gives a clean, structured view of your innovation roadmap.

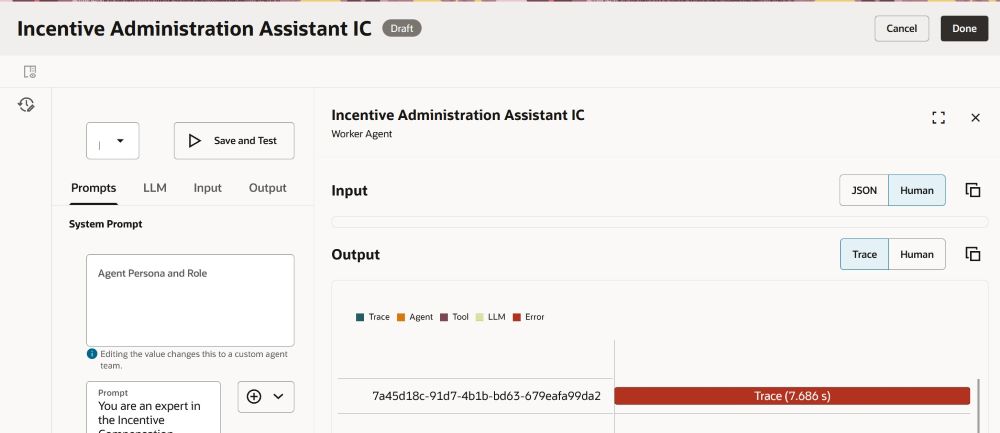

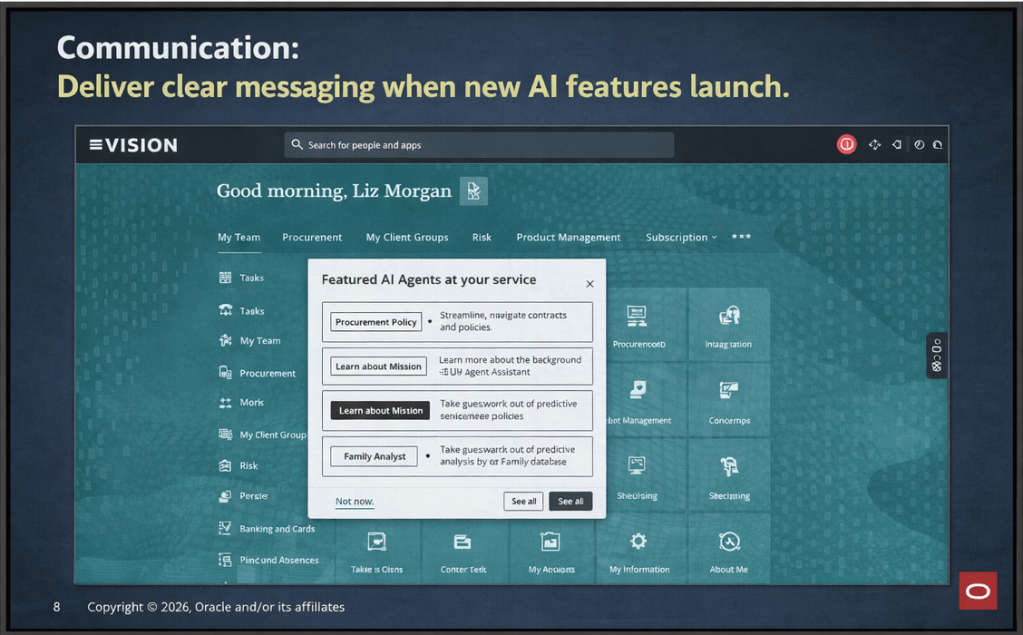

A significant addition to the platform is AI Assist, which was made generally available in late 2025. AI Assist is a generative AI-enabled assistant embedded throughout Navigator. It goes beyond a standard chatbot: it provides tailored recommendations, surfaces relevant documentation, highlights release roadmap changes based on your context, and flags project milestone risks. For partners, the practical implication is that our customers now have a self-service layer of intelligent guidance that can accelerate feature discovery and planning without always needing to raise a support request or wait for a consultant touchpoint.

How should partners be using Success Navigator? This was, for me, the most valuable part of the day. The Oracle product team was clear that Navigator is not just a tool for customers to log into independently. The expectation is that partners actively bring Navigator into their delivery model, whether that’s during implementation, post go-live optimisation, or ongoing managed service.

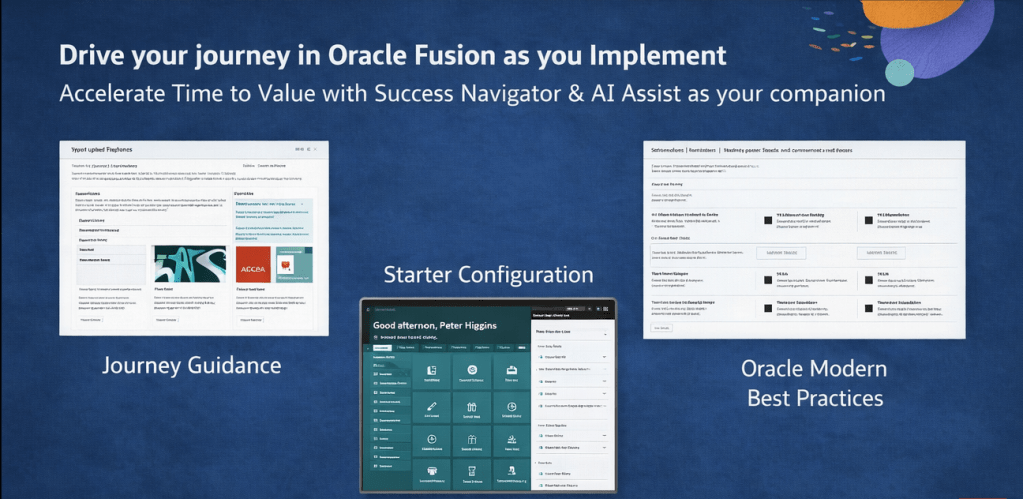

In practice, that means a few things. During implementation, partners should be walking customers through Navigator as part of onboarding, not treating it as a nice-to-have that gets mentioned at the end of a project. Feature planning sessions are more productive when they’re anchored in Navigator’s release data and OMBP content, rather than relying on spreadsheets or static documentation that goes out of date.

Post go-live, Navigator becomes a continuous value tool. The AI Assist agents can help customer teams stay ahead of quarterly release content, plan for Redwood migration milestones, and identify AI features that fit their production profile. Partners who actively guide their customers through this put them in a much stronger position than partners who leave customers to self-serve without direction.

One thing to note: Oracle has indicated that the platform continues to evolve, with enhancements planned around streamlined account management for customers with multiple accounts and improved programme management views. It’s worth keeping an eye on the in-application release announcements for Navigator itself.

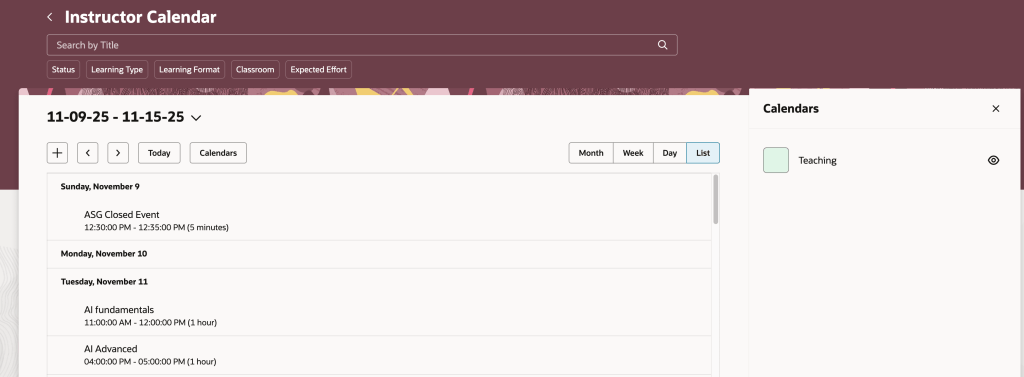

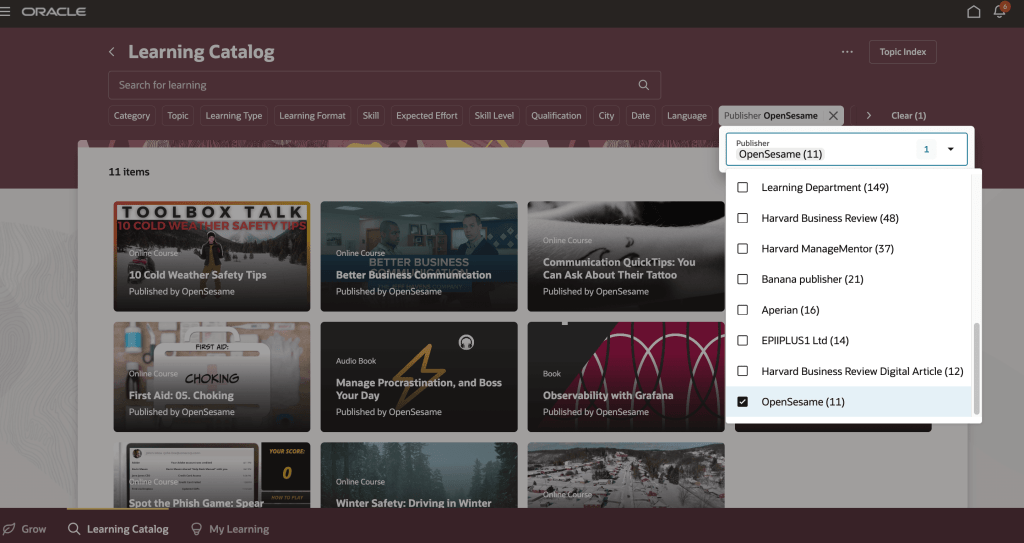

The second major focus of the day was Oracle Guided Learning (OGL), Oracle’s digital adoption platform (DAP) built natively for Oracle Cloud applications. OGL delivers in-application guidance, directly overlaid onto the Oracle Fusion interface, so users get real-time, contextual help without having to leave the system or refer to separate documentation. The core capabilities OGL brings to a customer environment are worth spelling out clearly, because I still encounter organisations that underestimate what the platform can do.

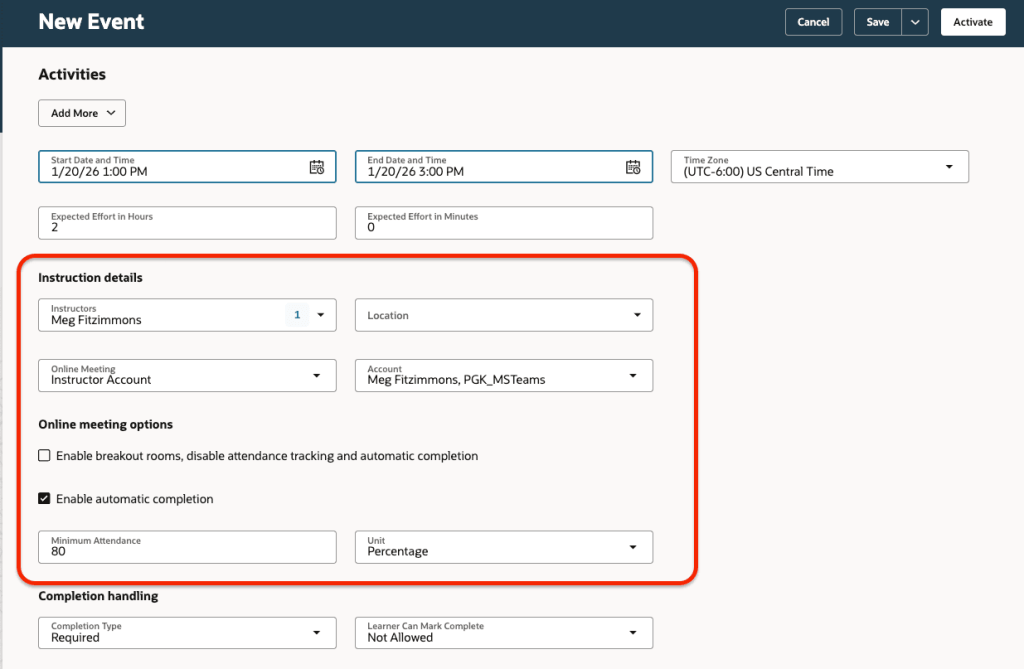

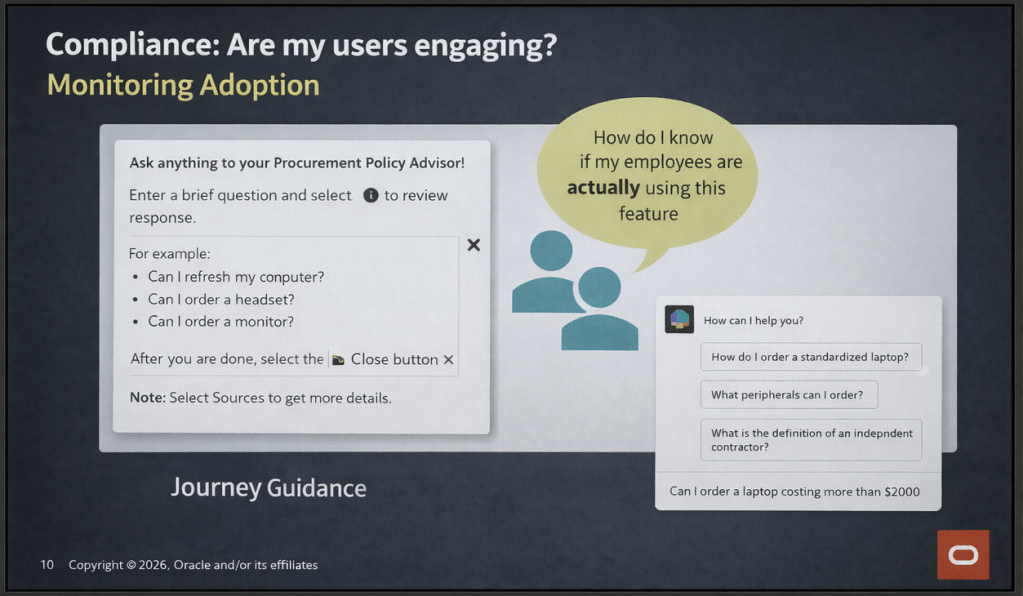

Process guides provide step-by-step walkthroughs for complex transactions, walking a user through the exact steps required to complete a task within the application. Smart tips and beacons offer contextual pop-up hints and visual cues at key points in the UI. The Help Panel gives users access to self-service guidance and documentation from within the application. In-app messaging allows administrators to send announcements, policy updates, and maintenance communications directly to users as they work, rather than relying on email campaigns that often go unread. Analytics then close the loop: OGL captures how users are engaging with content, where they’re dropping off, and which features or processes need additional guidance investment.

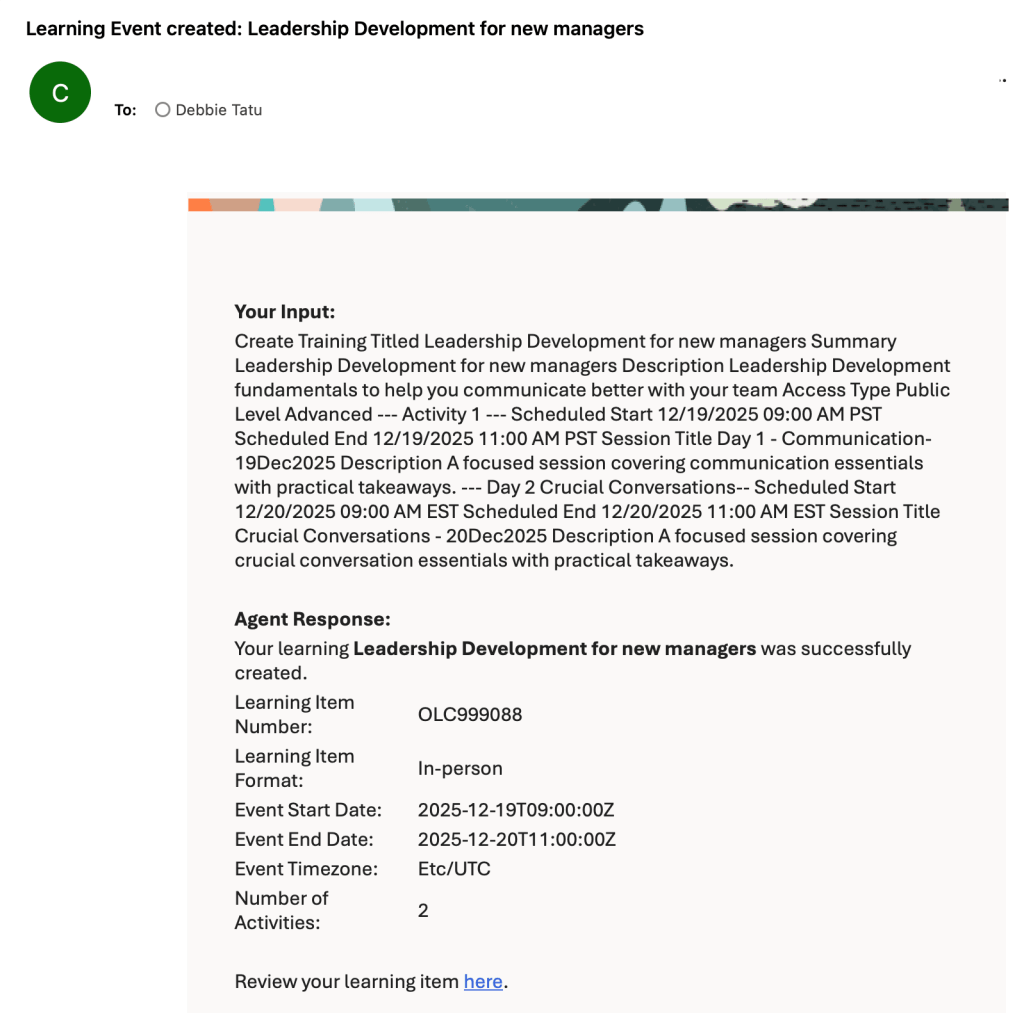

What’s particularly relevant for customers right now is the AI integration within OGL. The OGL 26A release introduced generative AI capabilities into the content authoring experience: content developers can use an AI assistant within the Full Editor to generate and rephrase step text for process guides, smart tips, beacons, and messages. This significantly reduces the time needed to build and maintain a library of guides, which has historically been a barrier to adoption on smaller or resource-constrained engagements.

OGL also extends beyond Oracle applications. It can be deployed across third-party applications including Salesforce, ServiceNow, Microsoft SharePoint, and others, which is useful context for customers running a mixed application estate.

A thread running through both topics today was change management, and it’s one that I think partners sometimes treat as a soft add-on rather than a structural part of delivery. The reality is that both Navigator and OGL exist precisely because technology adoption is a change management problem as much as a technical one.

Navigator gives you the roadmap visibility and planning structure to keep customers engaged with what’s coming and why it matters. OGL gives you the in-application mechanism to reinforce new behaviours, communicate changes, and support users at the moment of need. Used together, they cover a significant portion of the adoption lifecycle: from feature discovery and prioritisation, through to in-system guidance and analytics-driven optimisation.

The enablement message from Oracle today was straightforward: partners who embed these tools into their delivery model are better placed to demonstrate continuous value to customers. Customers who have a structured adoption programme, supported by Navigator and OGL, tend to see higher feature utilisation and lower support overhead than those who treat go-live as the end of the engagement.

It was a practical and well-structured day. The Oracle AI Success Navigator product team clearly has a strong vision for how the platform should be used within the partner ecosystem, and the investment Oracle has made in AI Assist and the broader AI Factory infrastructure is evident. For those of us working in Oracle Fusion Cloud implementations and managed services, the message is clear: these tools are available, they’re free as part of the Oracle subscription, and using them well is increasingly a differentiator in how we position value to our customers.

If you’re currently working on an Oracle Fusion Cloud engagement and you haven’t had a detailed look at what Cloud Success Navigator and OGL can offer, now is a good time to start that conversation.

Please note: all screenshots are the property of Oracle and are used in accordance with Oracle’s Copyright Guidelines.