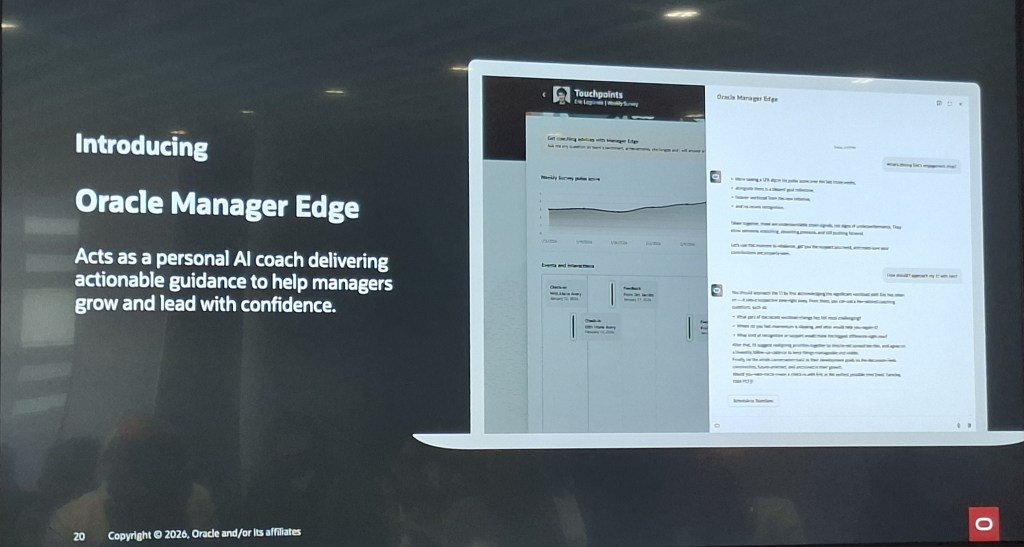

Oracle has been making a clear and increasingly consistent argument over the past few months: enterprise software has reached the limits of what a system of record can do. I’ve written before about the introduction of Agentic Applications at AI World London, and about the specific HCM applications that were announced alongside them. But there’s a broader story here that I haven’t fully explored yet, and it’s one that I think matters for every Fusion customer, not just those focused on HCM.

This post draws on the “Reinventing How Work Works” webinar, which stepped back from individual applications and made the architectural and commercial case for why the shift to agentic is happening, what it actually looks like in practice, and where Oracle is taking this next. If you’re trying to build internal momentum for agentic adoption, or if you’re trying to explain to a leadership team why this is different from previous AI announcements, this is the post to share.

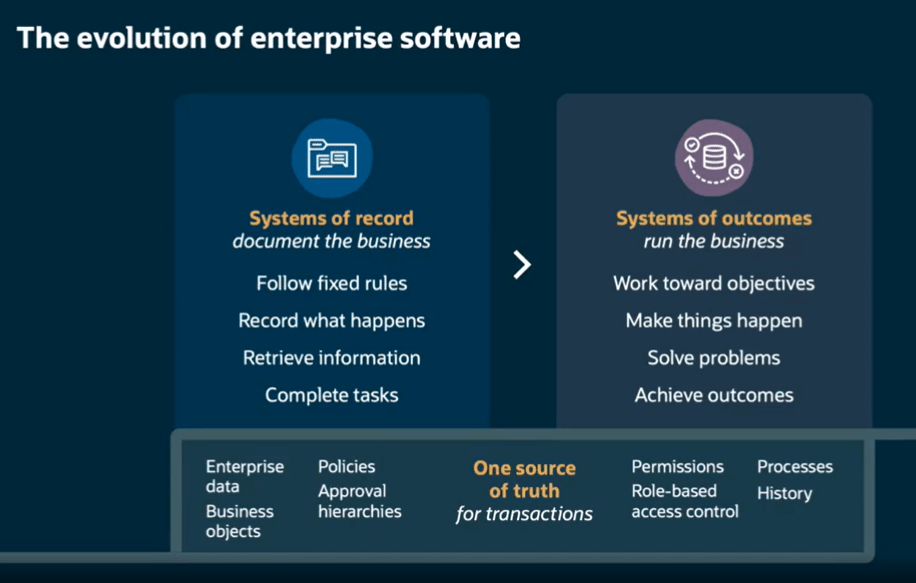

The framing that Oracle used throughout this webinar is, I think, one of the clearest explanations of what has actually changed. Traditional enterprise systems, including Fusion as it has historically operated, are systems of record. They follow fixed rules, capture what happened, retrieve information when asked, and complete transactions. They document the business. What they don’t do is run the business.

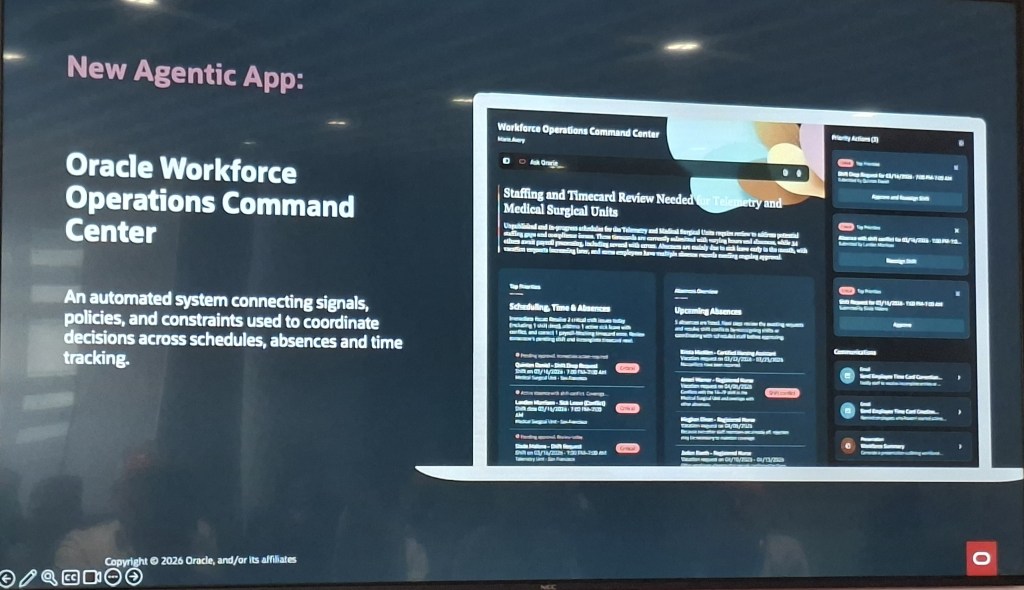

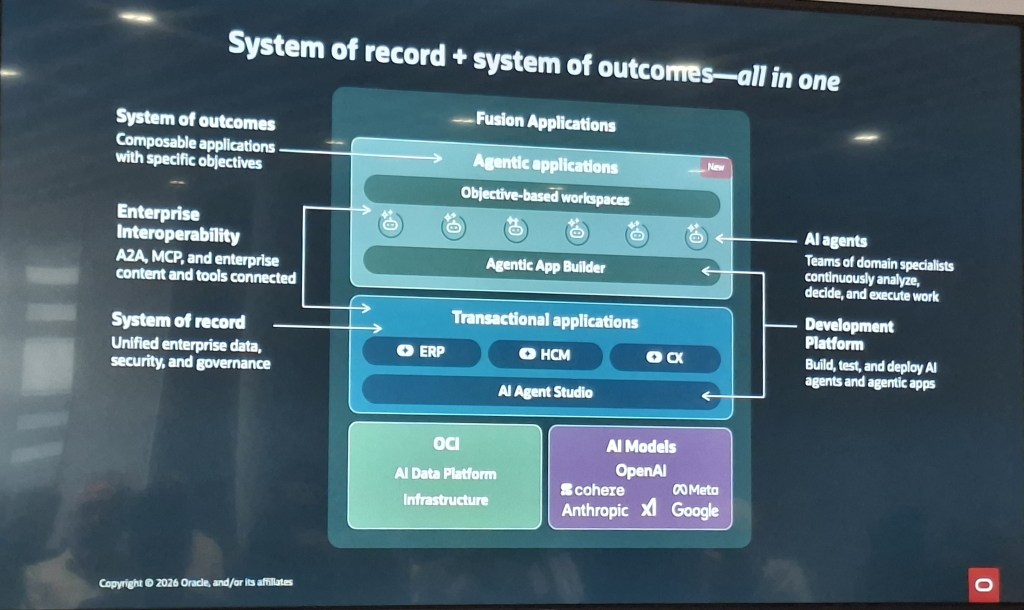

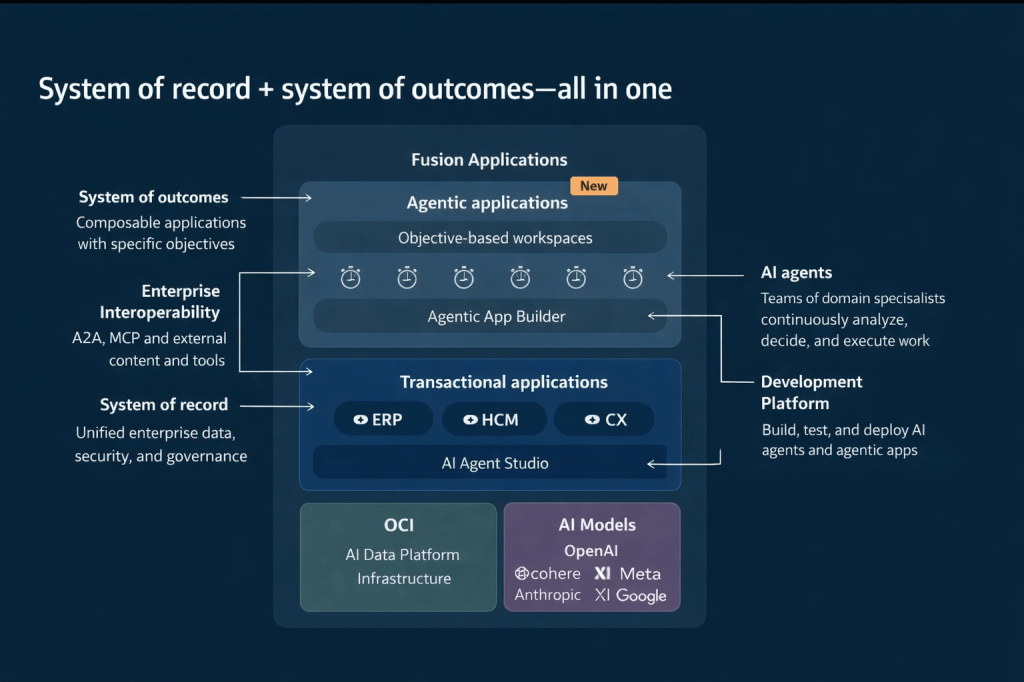

Agentic Applications represent a move to what Oracle calls systems of outcomes. Rather than waiting for a person to interpret data and decide what to do next, a system of outcomes works toward objectives, makes things happen, solves problems, and achieves results. The underlying system of record doesn’t go away. The data, governance, approval hierarchies, role-based access control, and audit history are still there, and in Oracle’s case, they’re still the source of truth for every transaction. What changes is the layer operating on top of that foundation.

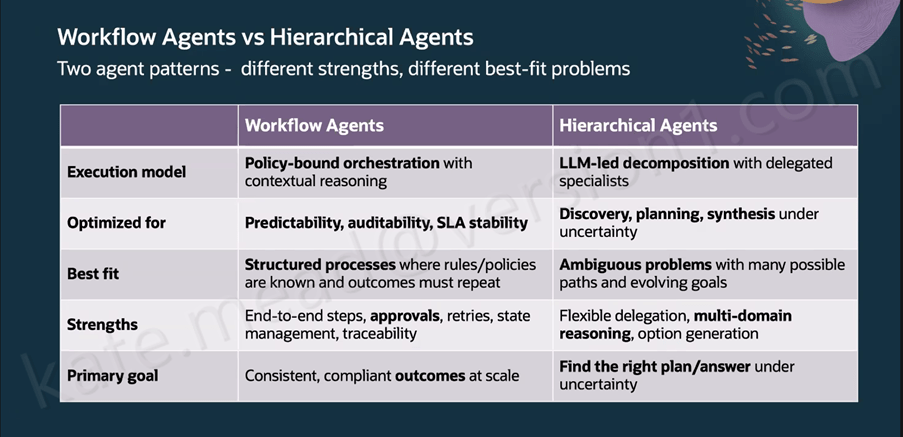

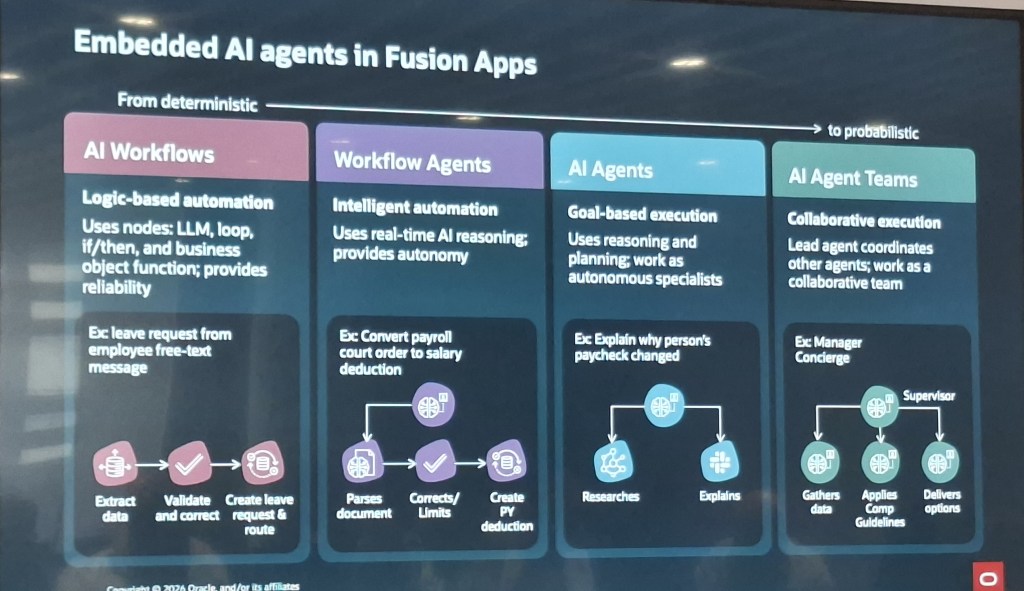

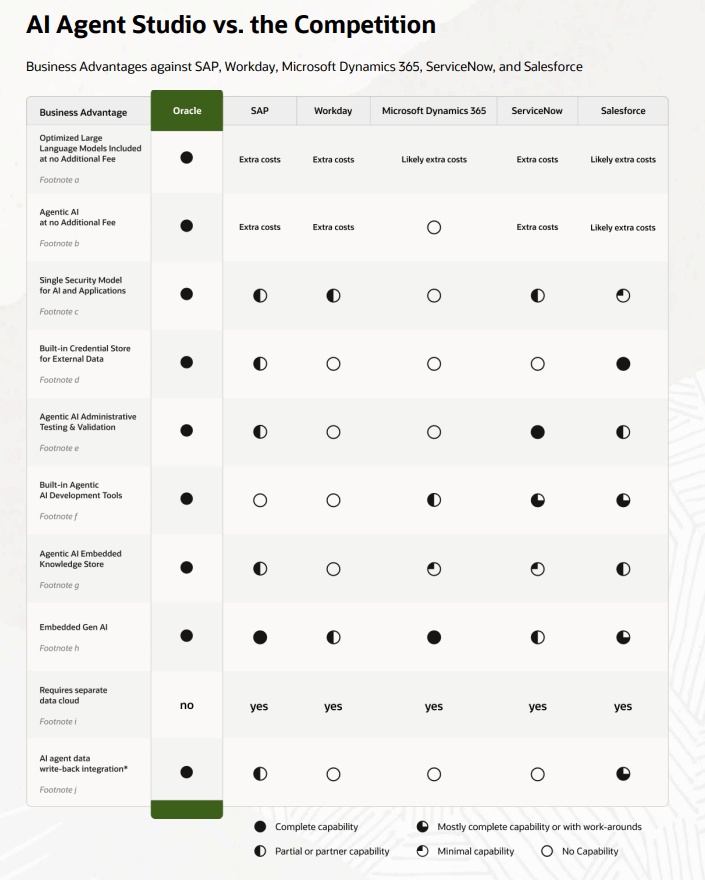

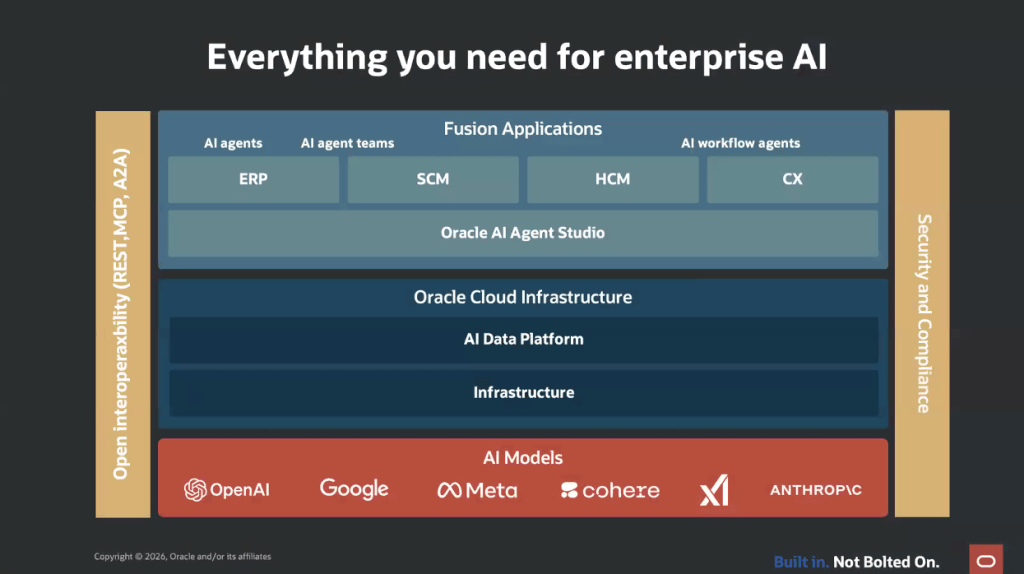

This architecture diagram is worth studying if you haven’t seen it. Agentic Applications sit in a new composable layer above the existing ERP, HCM, and CX transactional applications. That layer is powered by teams of AI agents coordinated through Oracle AI Agent Studio, drawing on the full enterprise data model, security model, and process history that already exists in Fusion. Beneath all of this, Oracle Cloud Infrastructure (OCI) provides the AI data platform, and a range of large language models (LLMs), including those from OpenAI, Cohere, Meta, Anthropic, xAI, and Google, are available depending on the task and preference.

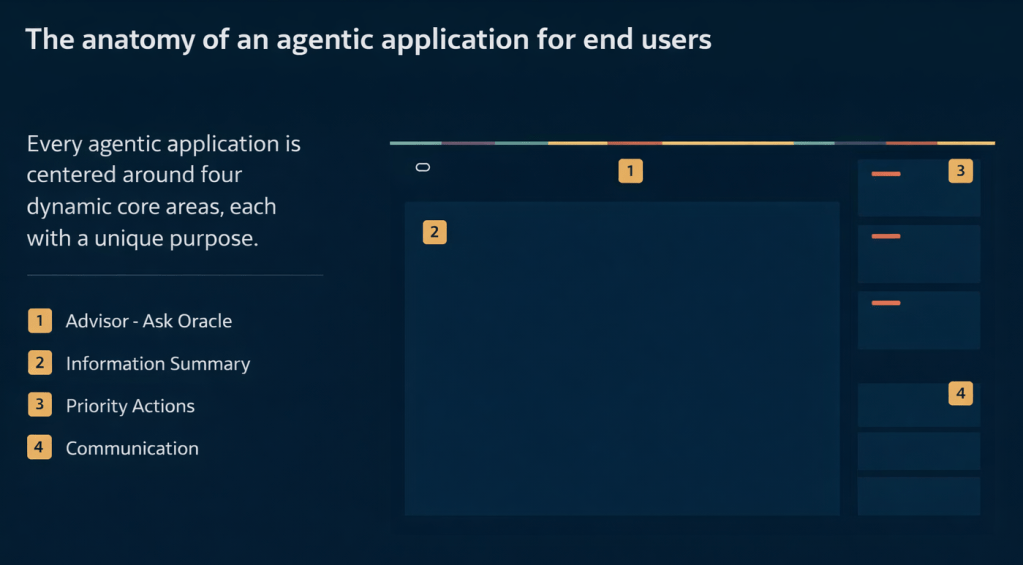

Every Fusion Agentic Application is built around four core dynamic areas. Understanding these is useful when you’re evaluating a specific application or explaining the concept to stakeholders.

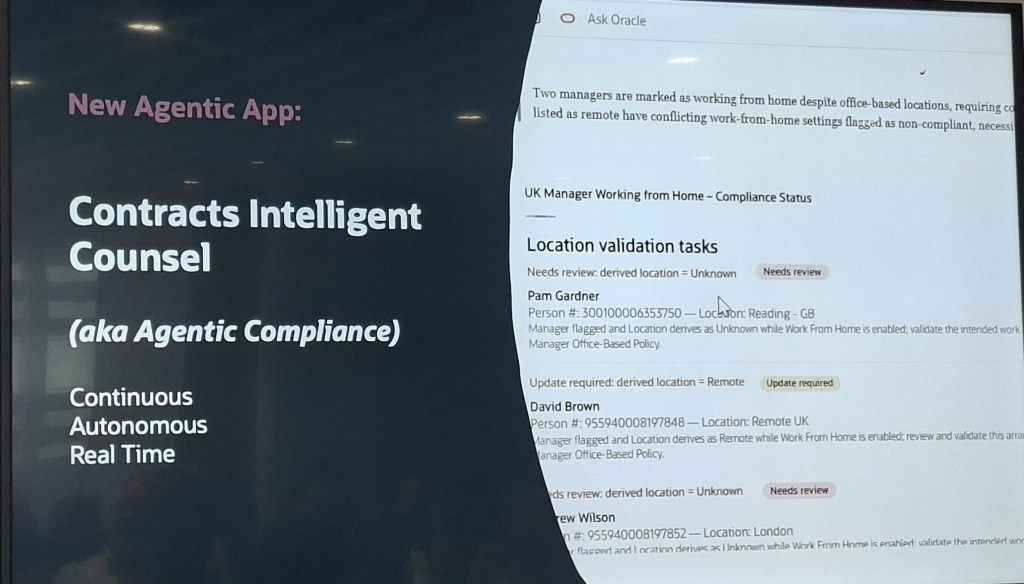

The first is the Advisor, which is the “Ask Oracle” conversational interface. This is where a user can ask natural language questions and get contextual, data-aware responses rather than navigating to a report. The second is the Information Summary, which provides an intelligent, prioritised view of what’s happening right now in that area of the business, surfaced automatically rather than requiring the user to run queries. The third is Priority Actions, a curated queue of recommended next steps that the agents have identified based on current conditions, risk signals, and business objectives. The fourth is Communications, which handles notifications, responses, and outbound actions within the appropriate governance boundaries.

These four areas appear consistently across all 22 applications, which is deliberate. Oracle’s position is that once a user understands the structure in one application, they can navigate any other agentic application without relearning the interface.

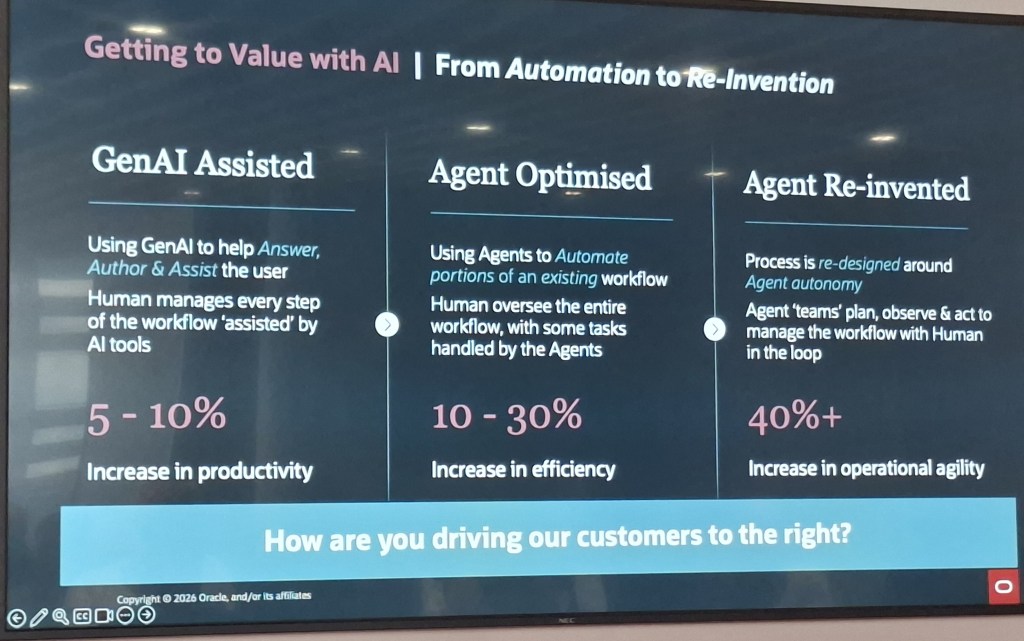

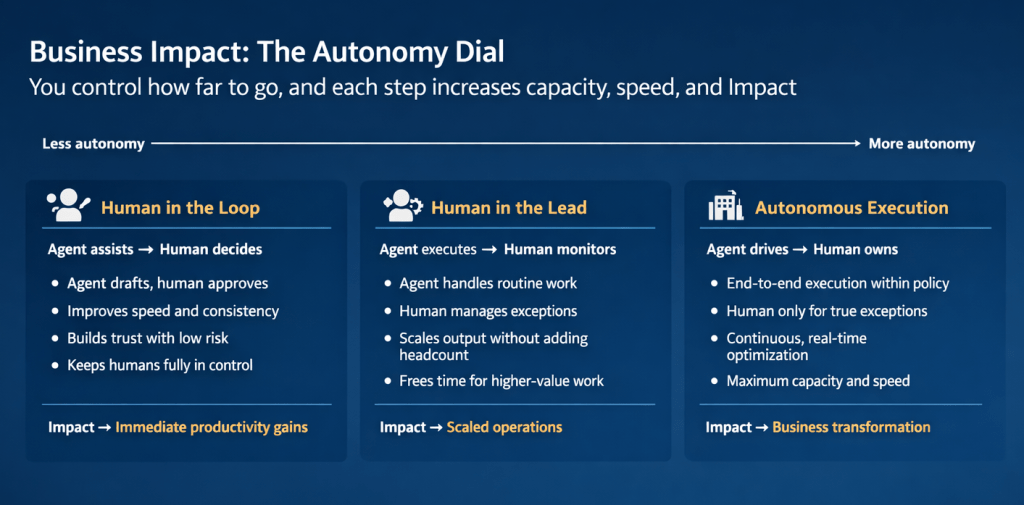

One of the most practically useful concepts introduced in this webinar is what Oracle calls the Autonomy Dial. It’s a spectrum with three positions, and it addresses one of the most common concerns I hear from customers and consultants: how much control do we give up?

At the “Human in the Loop” end, the agent assists and a person decides. The agent drafts, recommends, and prepares; the human reviews and approves. This builds trust, improves speed and consistency, and keeps people firmly in control. The business impact is described as immediate productivity gains.

In the middle is “Human in the Lead”, where the agent executes and a person monitors. The agent handles routine work and manages to policy; a person steps in for genuine exceptions. This scales output without adding headcount and frees teams for higher-value work. The impact here is scaled operations.

At the “Autonomous Execution” end, the agent drives and a person owns. End-to-end execution happens within policy, continuous real-time optimisation takes place, and human involvement is reserved for true exceptions. The impact is described as business transformation.

What I find compelling about this model is that it isn’t prescriptive. Oracle isn’t saying every organisation should start at one end or aim for the other. Each position on the dial represents a valid operating model depending on the process, the risk tolerance, and the maturity of the organisation. A payroll close process might comfortably sit at Human in the Lead. A workforce scheduling decision for a critical shift might warrant Human in the Loop until confidence is established. A high-volume procurement matching task might be a good candidate for Autonomous Execution relatively quickly.
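To make the dial concrete, here is a minimal Python sketch of how a per-process autonomy setting could route an agent's proposed action. It is an illustration only, not Oracle's implementation: the `Autonomy` enum, the `ProposedAction` fields, the `DIAL` mapping, and the returned route names are all hypothetical, and the process-to-position assignments simply mirror the examples in the paragraph above.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    HUMAN_IN_THE_LOOP = "agent assists, person decides"
    HUMAN_IN_THE_LEAD = "agent executes, person monitors"
    AUTONOMOUS_EXECUTION = "agent drives, person owns"


@dataclass
class ProposedAction:
    process: str
    description: str
    within_policy: bool  # hypothetical flag: did the action pass policy checks?


# Hypothetical dial settings, one per process, taken from the examples above.
DIAL = {
    "payroll_close": Autonomy.HUMAN_IN_THE_LEAD,
    "critical_shift_scheduling": Autonomy.HUMAN_IN_THE_LOOP,
    "procurement_matching": Autonomy.AUTONOMOUS_EXECUTION,
}


def route(action: ProposedAction, dial: dict) -> str:
    """Decide what happens to an agent's proposed action."""
    # Unknown processes default to the most conservative position.
    level = dial.get(action.process, Autonomy.HUMAN_IN_THE_LOOP)
    if level is Autonomy.HUMAN_IN_THE_LOOP:
        return "await_approval"      # a person reviews and approves everything
    if not action.within_policy:
        return "escalate"            # exceptions always go to a person
    if level is Autonomy.HUMAN_IN_THE_LEAD:
        return "execute_and_notify"  # the agent acts, a person monitors
    return "execute"                 # end-to-end execution within policy
```

The design point is that moving a process along the dial is a configuration change to `DIAL`, not a rewrite of the agent, and that out-of-policy actions reach a person at every position.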

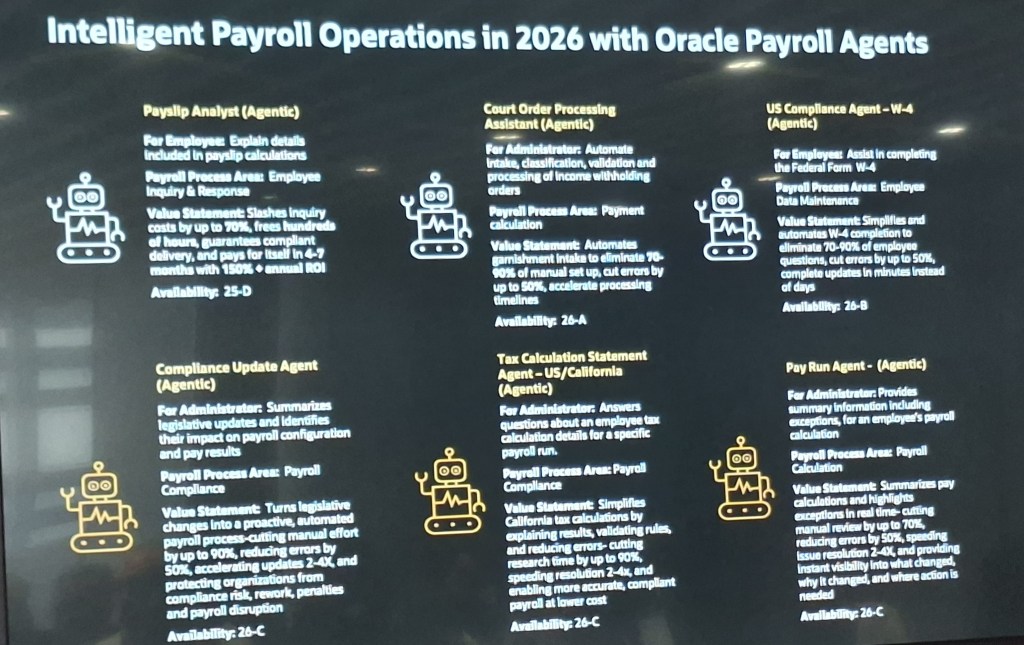

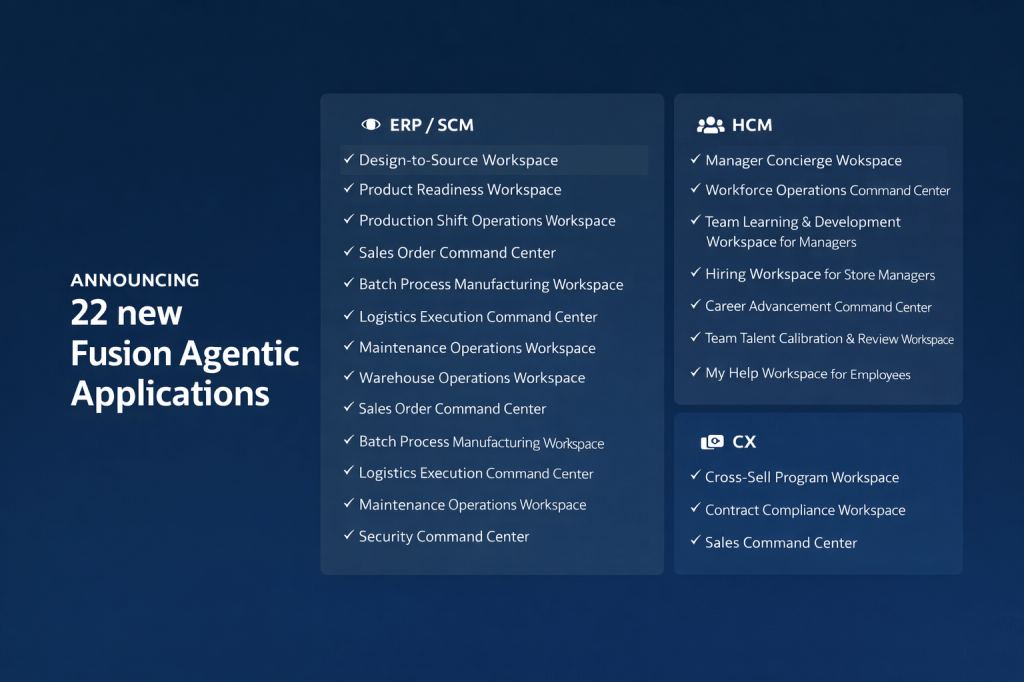

My earlier posts covered the eight HCM applications in detail. The full announcement of 22 applications in releases 26B / 26C spans ERP/SCM, HCM, and CX, and it’s worth understanding the breadth of this, because it signals how Oracle is positioning agentic across the entire Fusion suite rather than as an HCM-specific capability.

On the ERP and SCM side, the applications include Design-to-Source Workspace, Product Readiness Workspace, Production Shift Operations Workspace, Sales Order Command Centre, Batch Process Manufacturing Workspace, Logistics Execution Command Centre, Maintenance Operations Workspace, Warehouse Operations Workspace, Cost Accounting Close Workspace, Sourcing Command Centre, Collectors Workspace, and Security Command Centre.

The Design-to-Source Workspace is a useful example of the transformation logic. Previously, product design and bill of materials work happened in separate systems. Sourcing relied on items entered manually. Negotiation delays accumulated when information was missing or unresolved. With the agentic application, product specifications translate automatically into qualified supplier lists, bills of materials are generated directly from CAD files, at-risk negotiations are flagged automatically, and bids are evaluated across cost, lead time, quality, and risk in a single view. The outcome is faster time to market and improved sourcing cycle times.
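The webinar did not describe how bids are scored, so the following is only a sketch of one common way to compare options across several criteria in a single view: normalise each criterion across the bids and combine them with weights. The `Bid` fields, the weights, and the `rank_bids` function are assumptions made for illustration and do not reflect Oracle's method.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    supplier: str
    cost: float          # lower is better
    lead_time_days: int  # lower is better
    quality: float       # 0 to 1, higher is better
    risk: float          # 0 to 1, lower is better


# Illustrative weights only; chosen to sum to 1.
WEIGHTS = {"cost": 0.4, "lead_time_days": 0.2, "quality": 0.25, "risk": 0.15}
LOWER_IS_BETTER = {"cost", "lead_time_days", "risk"}


def _normalise(values, lower_is_better):
    """Min-max scale to 0..1 so that 1 is always the best value."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0] * len(values)  # all bids tie on this criterion
    scaled = [(v - lo) / (hi - lo) for v in values]
    return [1.0 - s for s in scaled] if lower_is_better else scaled


def rank_bids(bids):
    """Return (supplier, score) pairs, best score first."""
    totals = [0.0] * len(bids)
    for criterion, weight in WEIGHTS.items():
        column = [float(getattr(b, criterion)) for b in bids]
        scores = _normalise(column, criterion in LOWER_IS_BETTER)
        for i, score in enumerate(scores):
            totals[i] += weight * score
    pairs = zip((b.supplier for b in bids), totals)
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)
```

Calling `rank_bids` with a list of `Bid` objects returns the suppliers ordered by combined score, which is the kind of single ranked view the paragraph describes.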

On the CX side, three applications have been announced: Cross-Sell Program Workspace, Contract Compliance Workspace, and Sales Command Centre. For CX teams, the Sales Command Centre in particular brings together the kind of deal health monitoring, risk flagging, and next-step recommendation that previously required significant manual analysis across multiple reports.

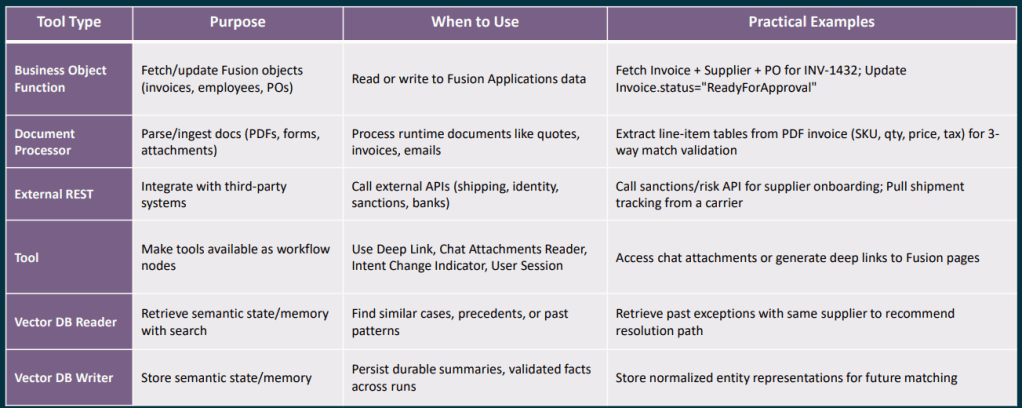

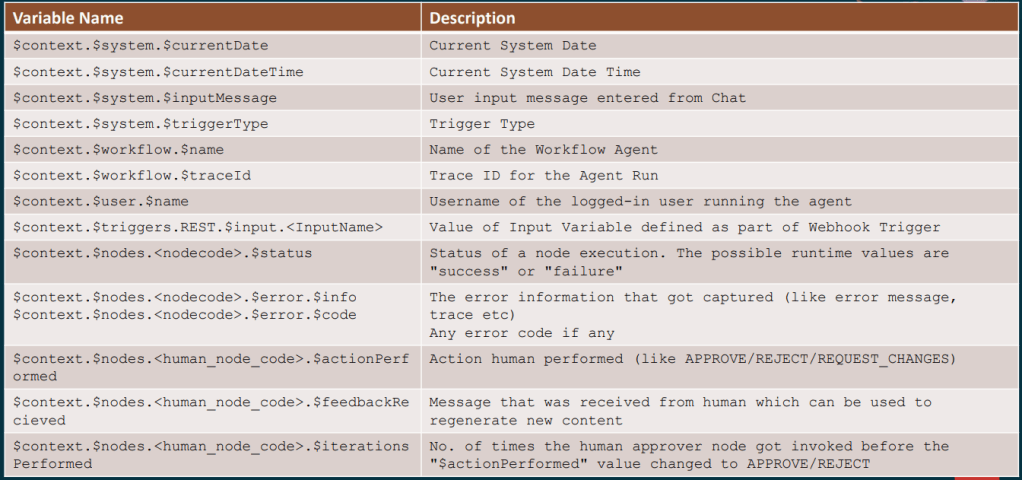

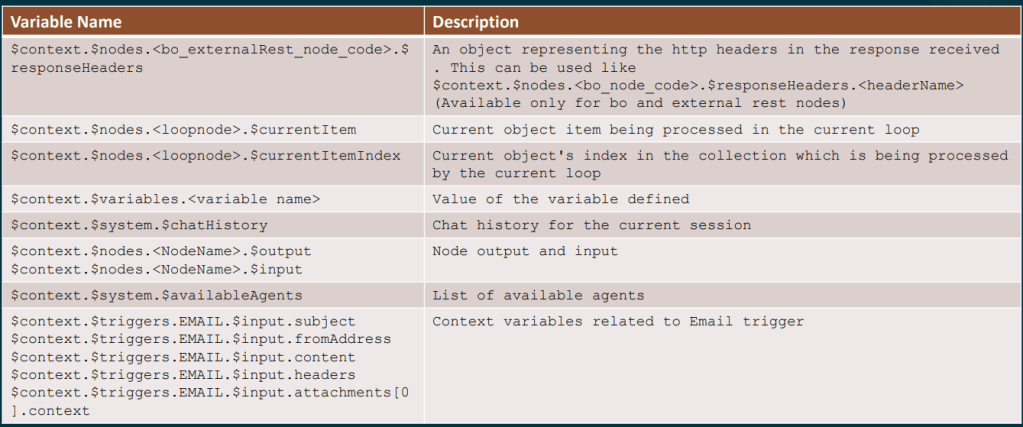

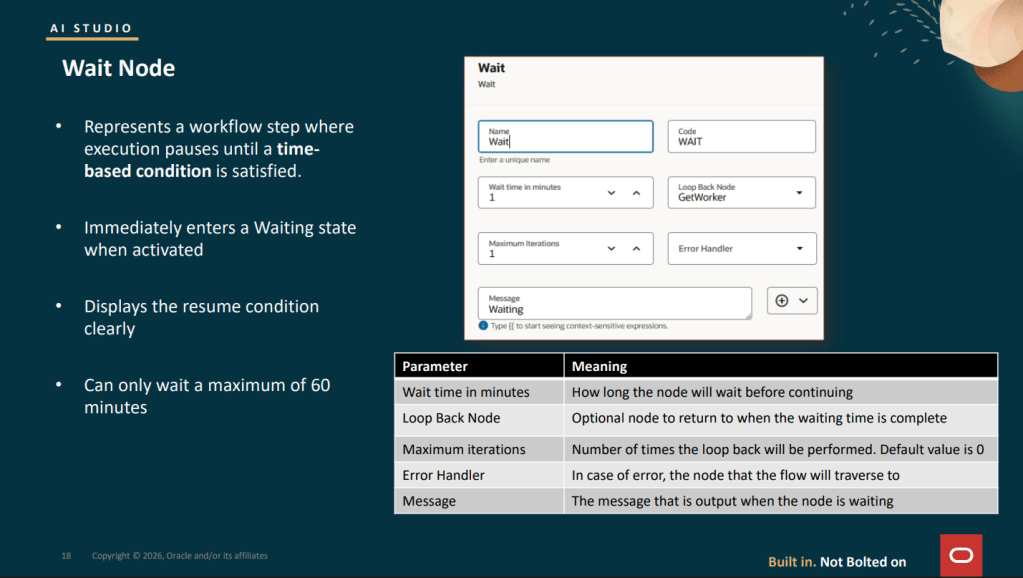

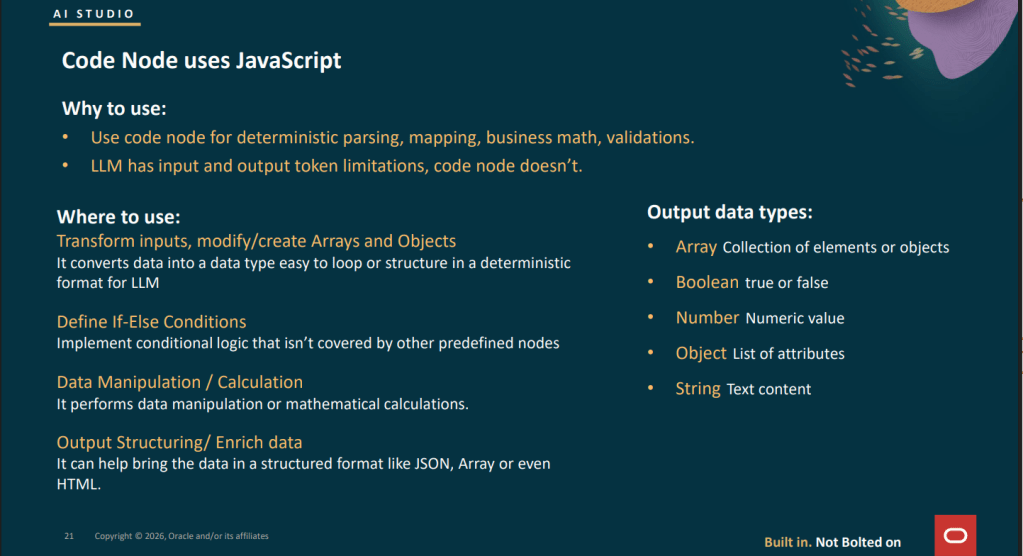

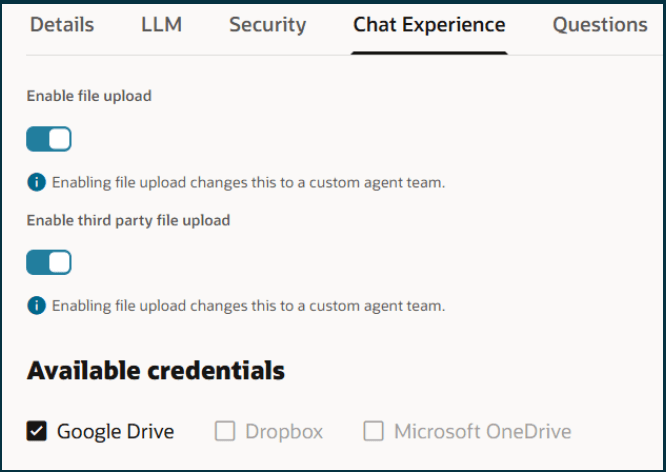

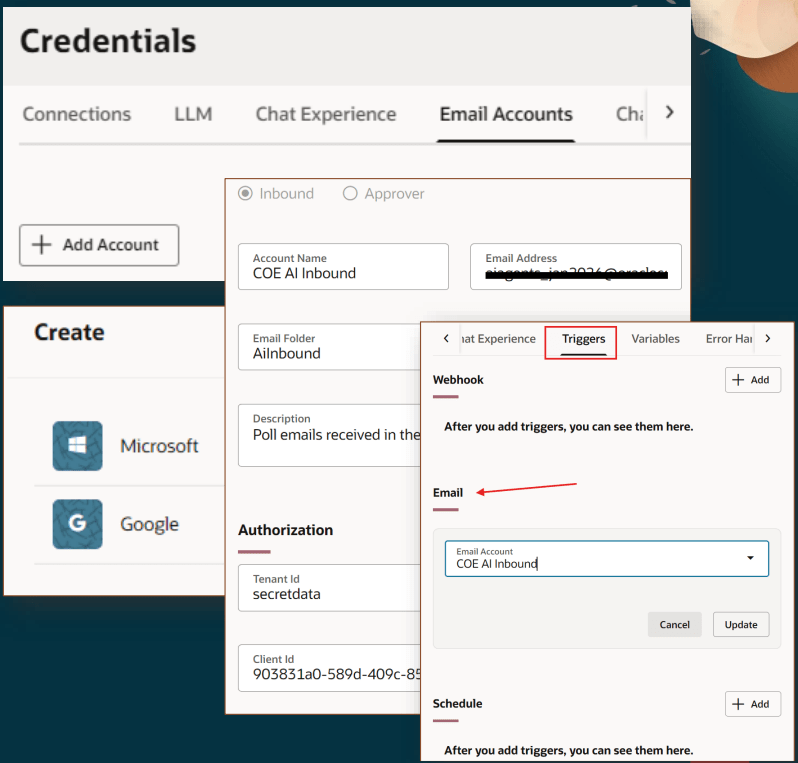

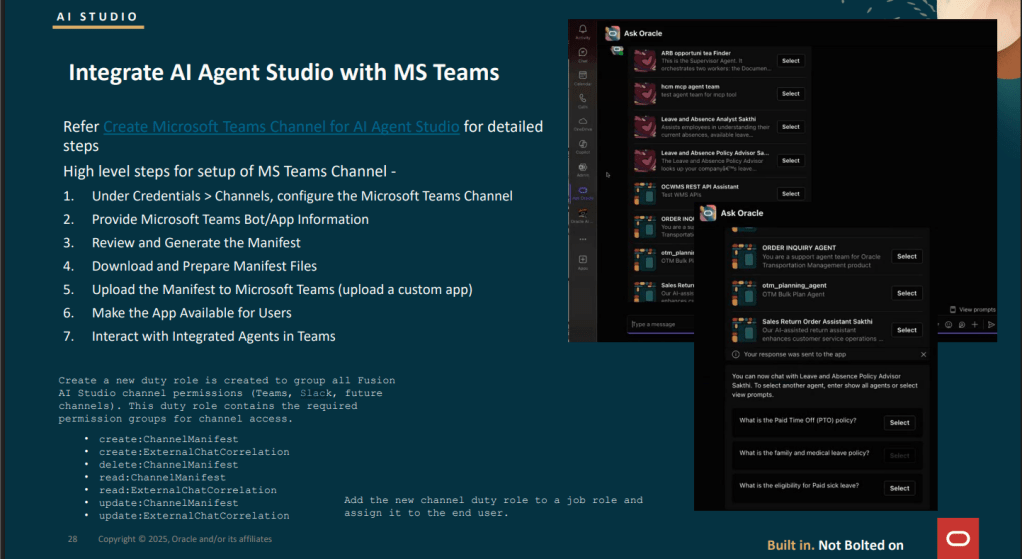

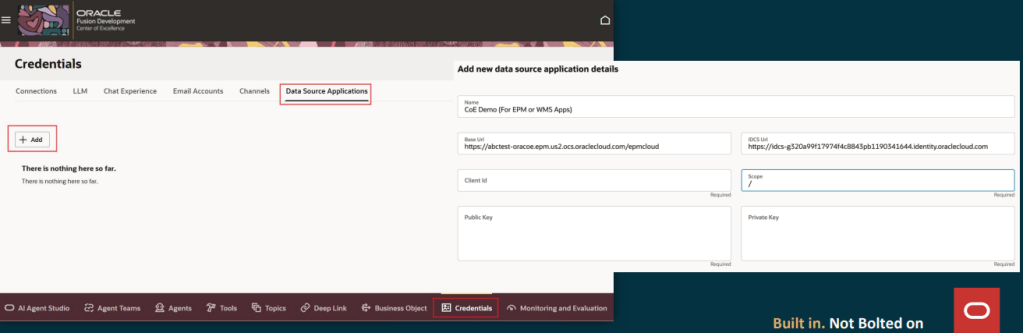

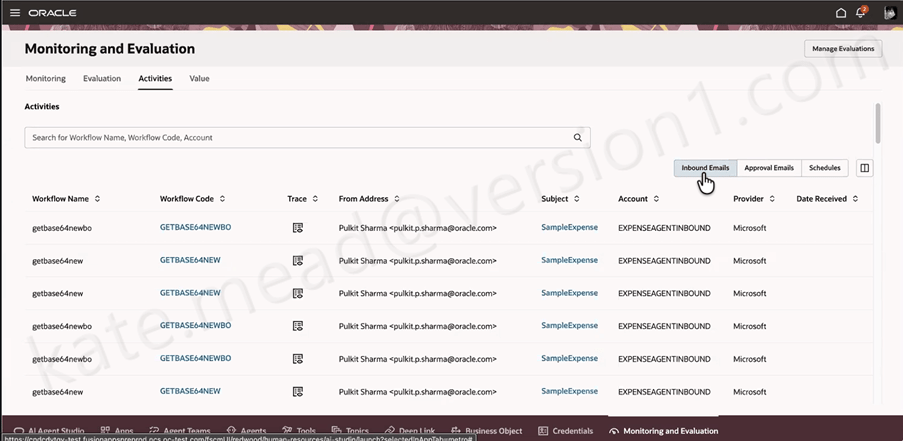

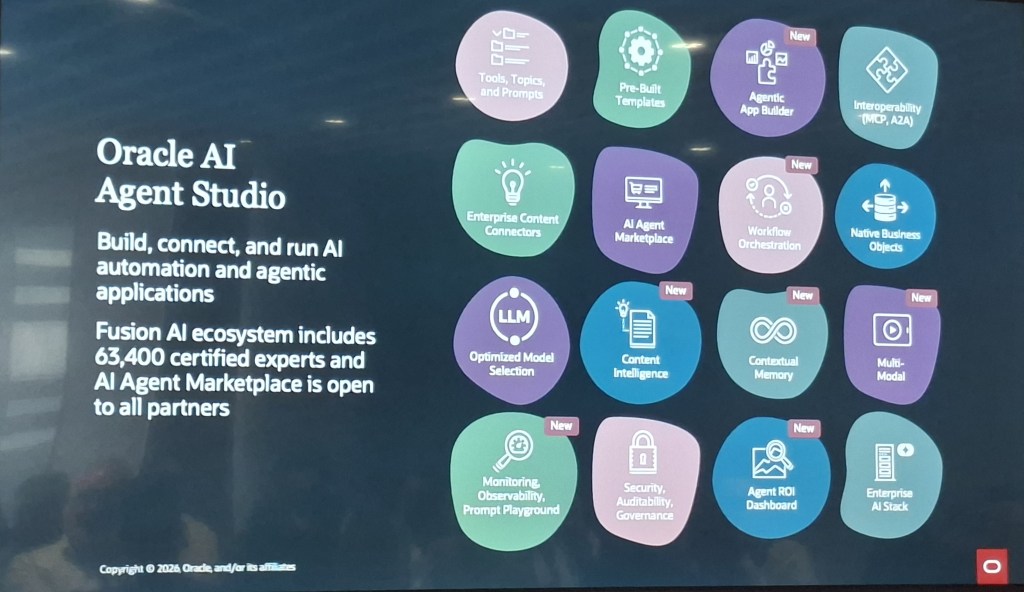

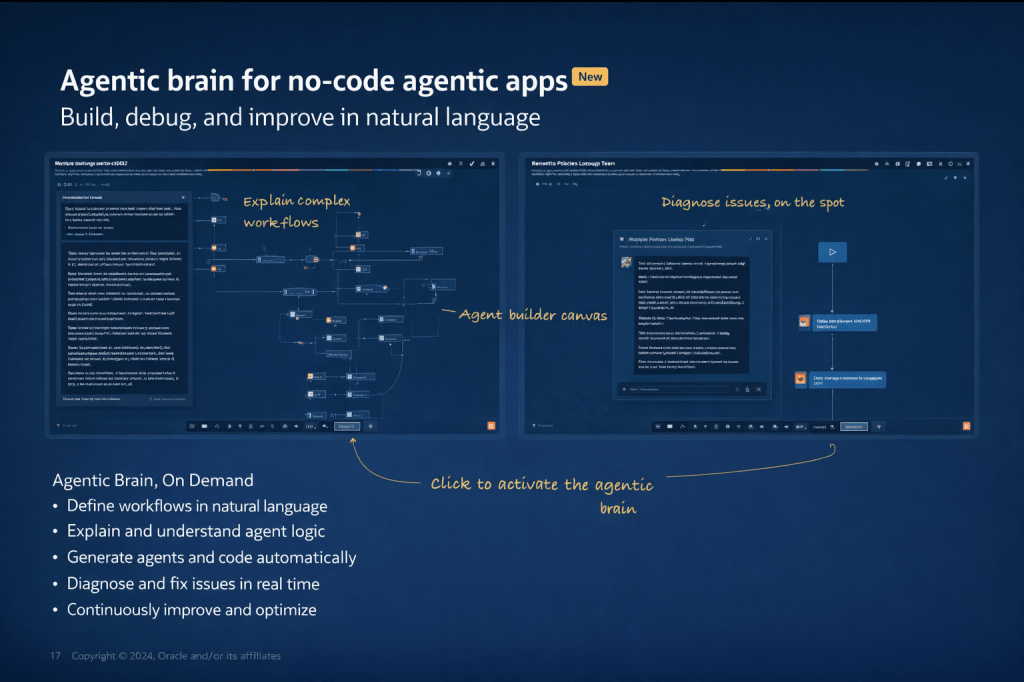

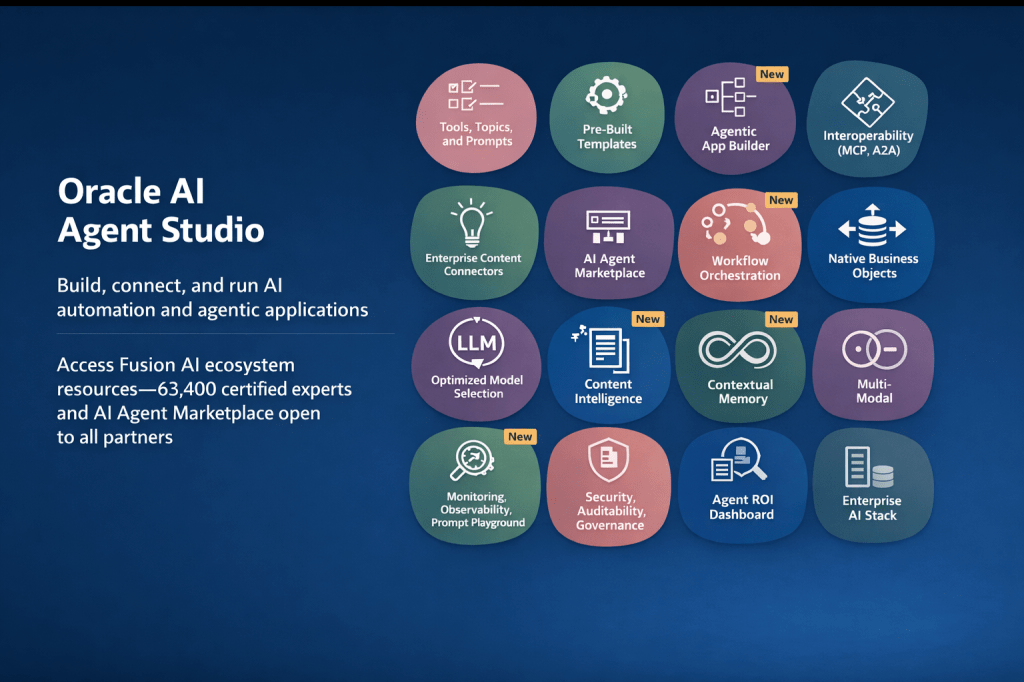

I’ve written in detail about Oracle AI Agent Studio in previous posts, but the webinar highlighted several new capabilities that are worth calling out specifically, because some of them genuinely change what’s possible for teams building custom agentic applications.

The most significant new addition is the Agentic App Builder, which is released in 26C. This is what Oracle describes as a “no-code agentic brain”: you describe your objective in natural language, the system explains and builds the workflow, generates agents and the underlying code automatically, and allows you to diagnose and fix issues in real time. In the demo, a user types a description of a sales opportunity health and risk management app, and within moments a structured agentic application is assembled from reusable agents, with a Deal Summary Agent, a Risk Agent, a Customer Insights Agent, and a Process Agent already in place and connected. It’s a significant step forward from the existing builder experience.

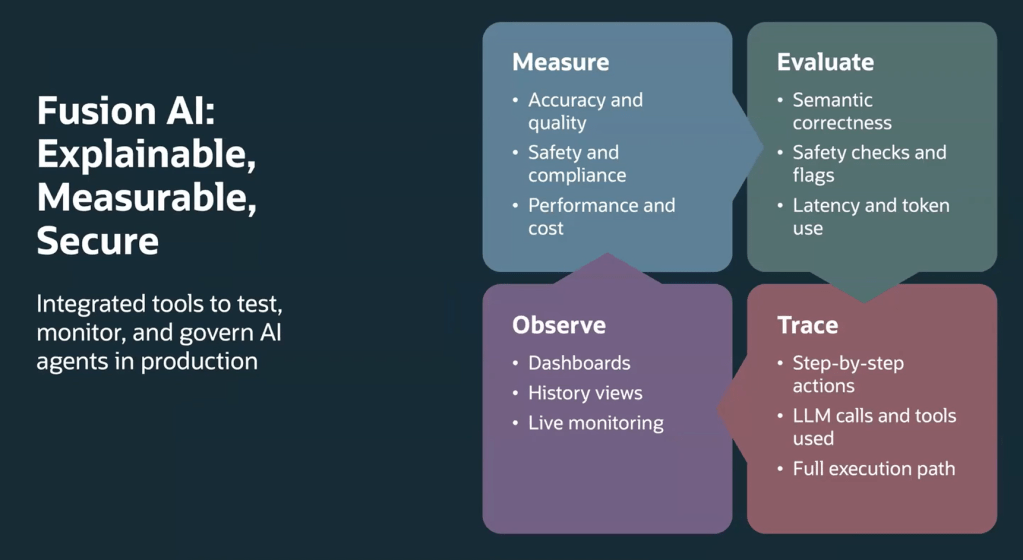

Alongside this, several other capabilities have been marked as new in the current release: Workflow Orchestration, Content Intelligence, Contextual Memory, Multi-Modal support, an Agent ROI Dashboard, and enhanced Security, Auditability, and Governance controls. Contextual Memory deserves particular attention, because it allows agents to retain information across interactions. That is what enables genuinely personalised, continuous support rather than a stateless response to each individual query.
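The difference between stateless and memory-backed behaviour can be sketched in a few lines of Python. This is a toy illustration of the general idea, not the AI Agent Studio feature itself: the `ContextualMemory` class, its methods, and `build_prompt` are hypothetical names.

```python
from collections import defaultdict
from typing import Optional


class ContextualMemory:
    """Hypothetical per-user store that outlives a single interaction."""

    def __init__(self):
        self._facts = defaultdict(dict)

    def remember(self, user: str, key: str, value: str) -> None:
        self._facts[user][key] = value

    def recall(self, user: str) -> dict:
        # Return a copy so callers cannot mutate the stored facts.
        return dict(self._facts.get(user, {}))


def build_prompt(user: str, question: str,
                 memory: Optional[ContextualMemory] = None) -> str:
    """Assemble the text an agent would send to a model.

    Without memory the prompt contains only the question (stateless).
    With memory, facts retained from earlier interactions are prepended.
    """
    if memory is None:
        return question
    facts = memory.recall(user)
    if not facts:
        return question
    context = "\n".join(f"{key}: {value}" for key, value in sorted(facts.items()))
    return f"Known context:\n{context}\n\nQuestion: {question}"
```

With a fact stored through `remember`, a later call to `build_prompt` for the same user carries that context forward, whereas the stateless call sees only the new question.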

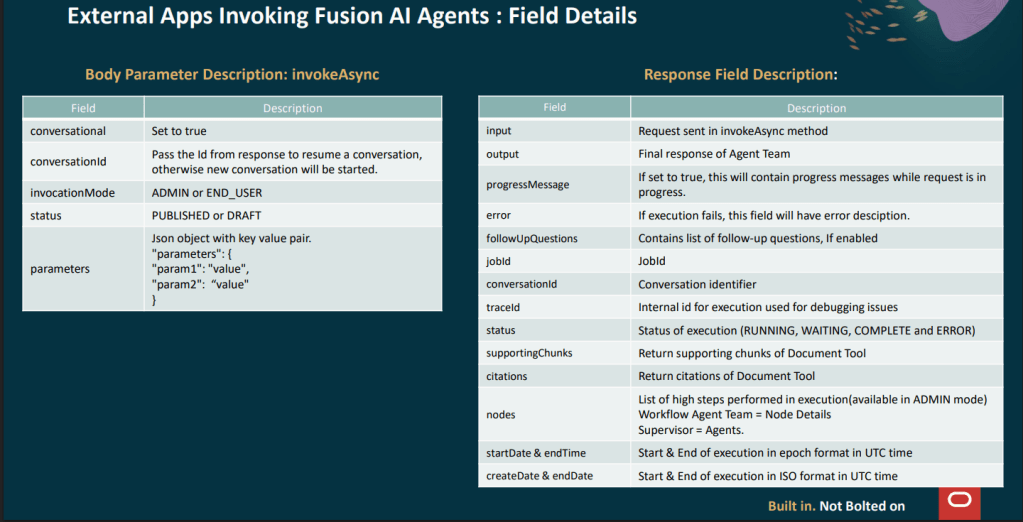

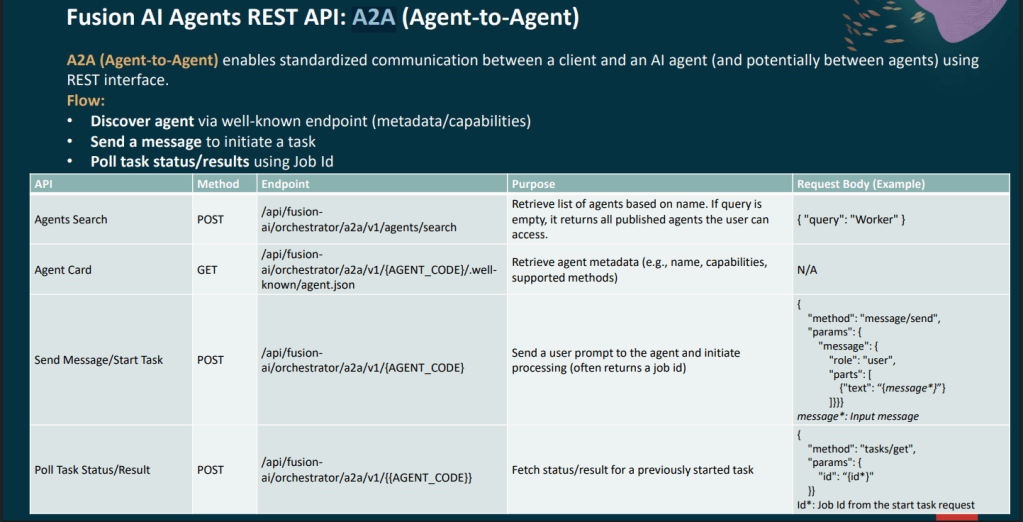

The studio now also supports full interoperability through MCP (Model Context Protocol) and A2A (Agent-to-Agent) protocols, which means agents built in Fusion can exchange context with agents or tools running outside the Fusion estate, provided the appropriate governance controls are in place.
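For readers who have not looked at MCP itself, its messages are JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The sketch below builds such a request in Python. The helper name `mcp_tool_call`, the tool name `get_open_requisitions`, and its arguments are hypothetical, and nothing here represents an Oracle API.

```python
import json


def mcp_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialise an MCP tool invocation as a JSON-RPC 2.0 request."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool exposed by a system outside the Fusion estate.
message = mcp_tool_call(1, "get_open_requisitions", {"business_unit": "UK01"})
```

Because the envelope is a published standard rather than a vendor-specific format, an agent on either side only needs to agree on the tool name and its arguments.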

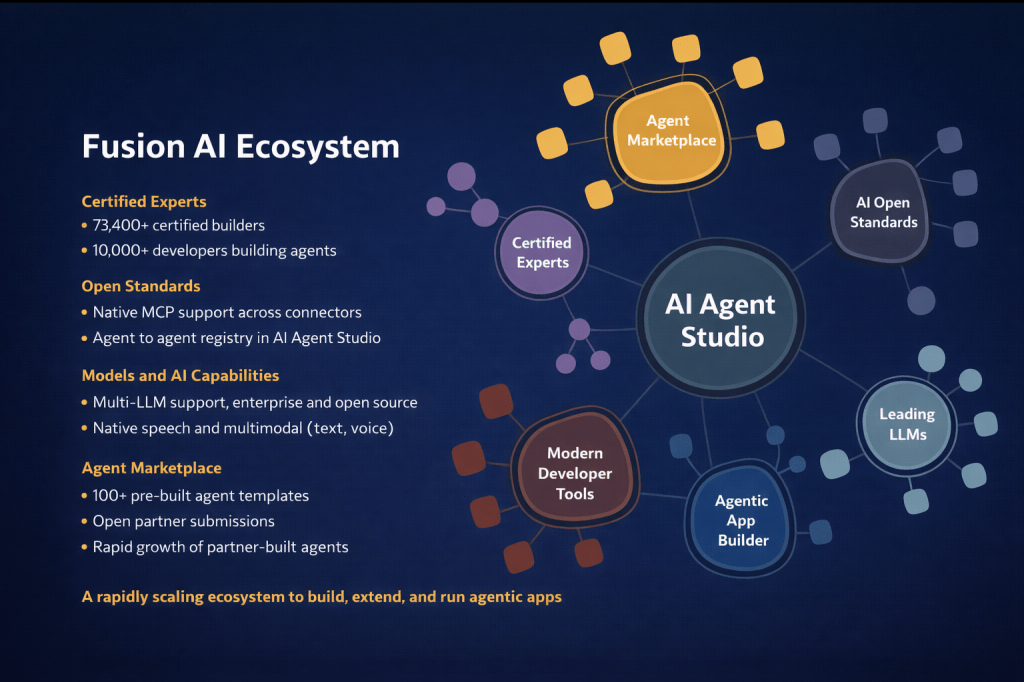

One thing the webinar made very clear is that Oracle isn’t building this alone. The Fusion AI ecosystem now includes 73,400 certified builders, 10,000 developers actively building agents, and over 100 pre-built agent templates in the AI Agent Marketplace, which is now open to all partners for submissions. Open standard support includes native MCP integration across connectors and an agent-to-agent registry within Oracle AI Agent Studio itself.

For customers, this matters because it means the pool of available agents and expertise is growing rapidly. You don’t need to build everything from scratch, and you don’t need to rely solely on Oracle to extend the platform. The open partner submission model for the marketplace is a meaningful shift, and it’s one that will accelerate the availability of domain-specific and industry-specific agents over the coming months.

The summary that Oracle closed with is a useful way to frame internal conversations: Fusion is moving from systems of record to systems of outcomes. Agentic Applications get work done. Oracle AI Agent Studio lets you build, deploy, and scale agents specific to your organisation. OCI AI Advantage runs it all securely at scale.

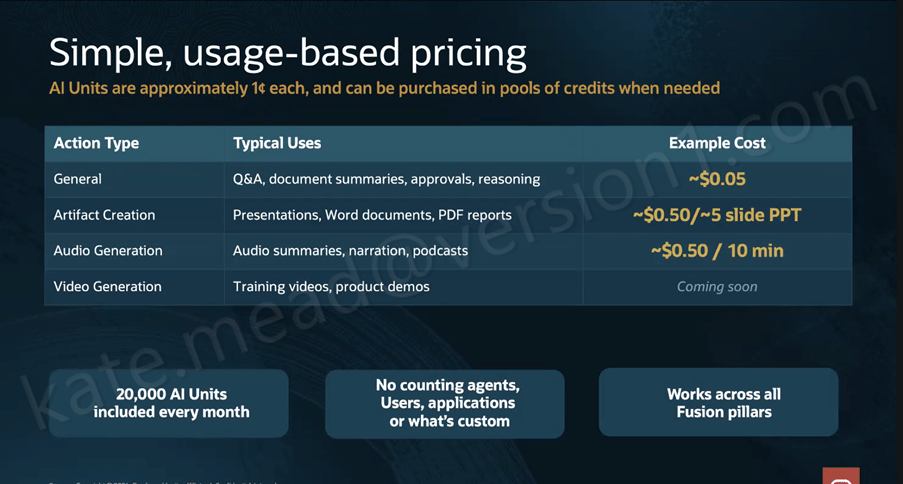

What I’d encourage any Fusion customer to take from this is that the window to start is now. The pricing model has already been simplified significantly (covered in my earlier post), the tooling to build and extend has matured substantially, and the evidence base from production deployments is solid. Starting with one application in one process area, positioned at Human in the Loop on the autonomy dial, is a low-risk, high-value entry point that builds organisational confidence while delivering measurable results.

If you’re thinking about where to start or how to make the case internally, I’m happy to talk it through. In the meantime, why not check out my earlier post on the HCM-specific Agentic Applications announced at AI World London? You can find it here. And if you missed the original announcement post covering the architecture, the maturity model, and the updated pricing, that’s a useful starting point too, and you can find it here.

Please note that all screenshots are the property of Oracle and are used according to their Copyright Guidelines.